LuSNAR: A Lunar Segmentation, Navigation and Reconstruction Dataset

Abstract

LuSNAR addresses a critical gap in autonomous lunar exploration by providing a multi-task, multi-scene, and multi-label benchmark dataset. Unlike existing datasets that focus on single tasks, LuSNAR enables comprehensive evaluation of the perception and navigation systems that next-generation lunar rovers depend on. The dataset includes 108 GB of synchronized multi-sensor data: high-resolution stereo camera images (1024×1024 at 10 Hz), dense depth maps, pixel-perfect semantic labels across five categories (regolith, craters, rocks, mountains, sky), 128-beam LiDAR point clouds with semantic annotations, and 100 Hz IMU measurements with ground truth trajectories. Nine simulation scenes cover varied lunar terrain, categorized by topographic relief and object density. Built using Unreal Engine with physically accurate sensor simulation, LuSNAR provides photo-realistic rendering and supports multiple research tasks, including 2D/3D semantic segmentation, visual/LiDAR SLAM, stereo matching, and 3D reconstruction.

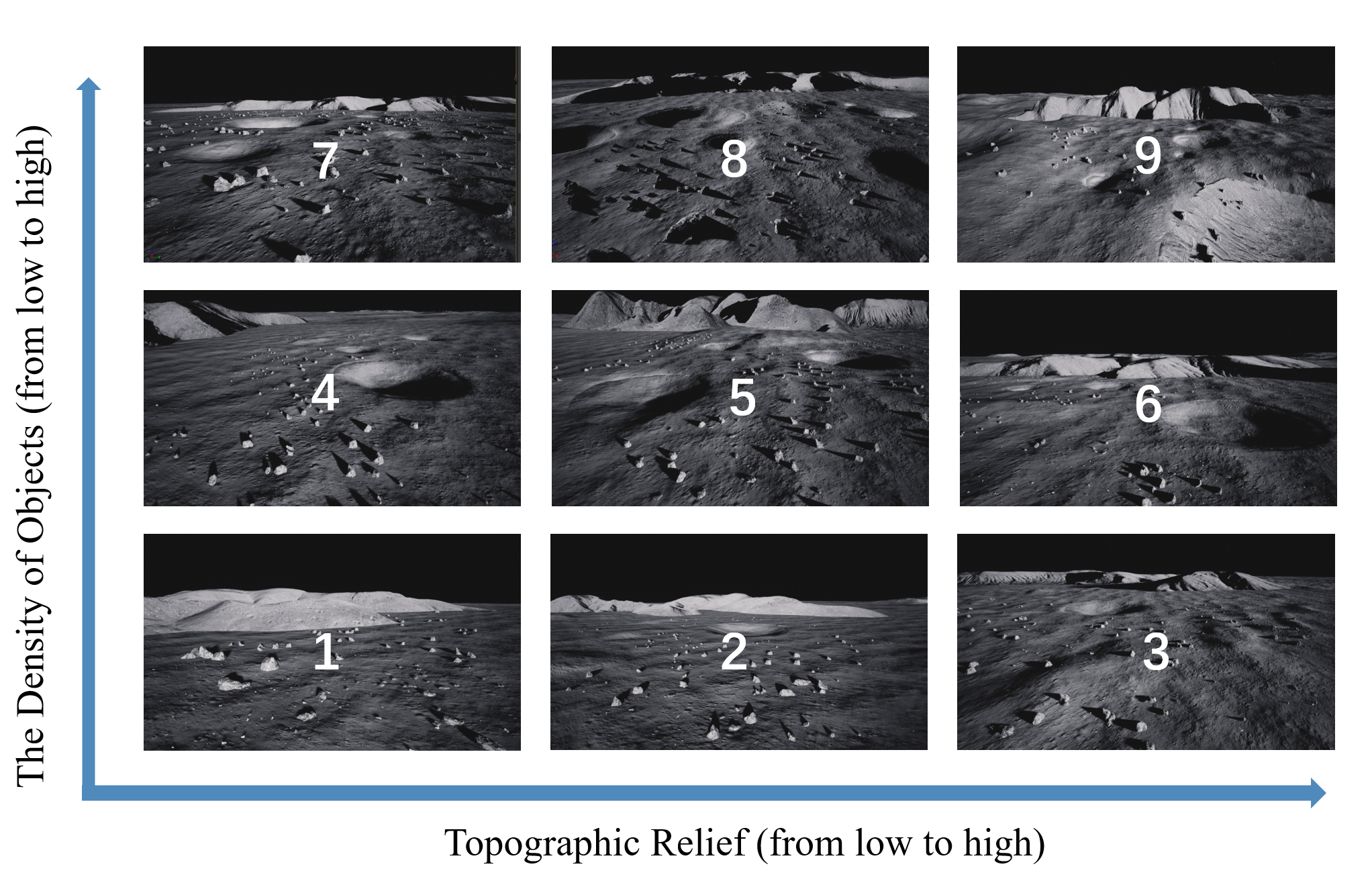

Nine simulation scenes with varying topographic complexity and object density.

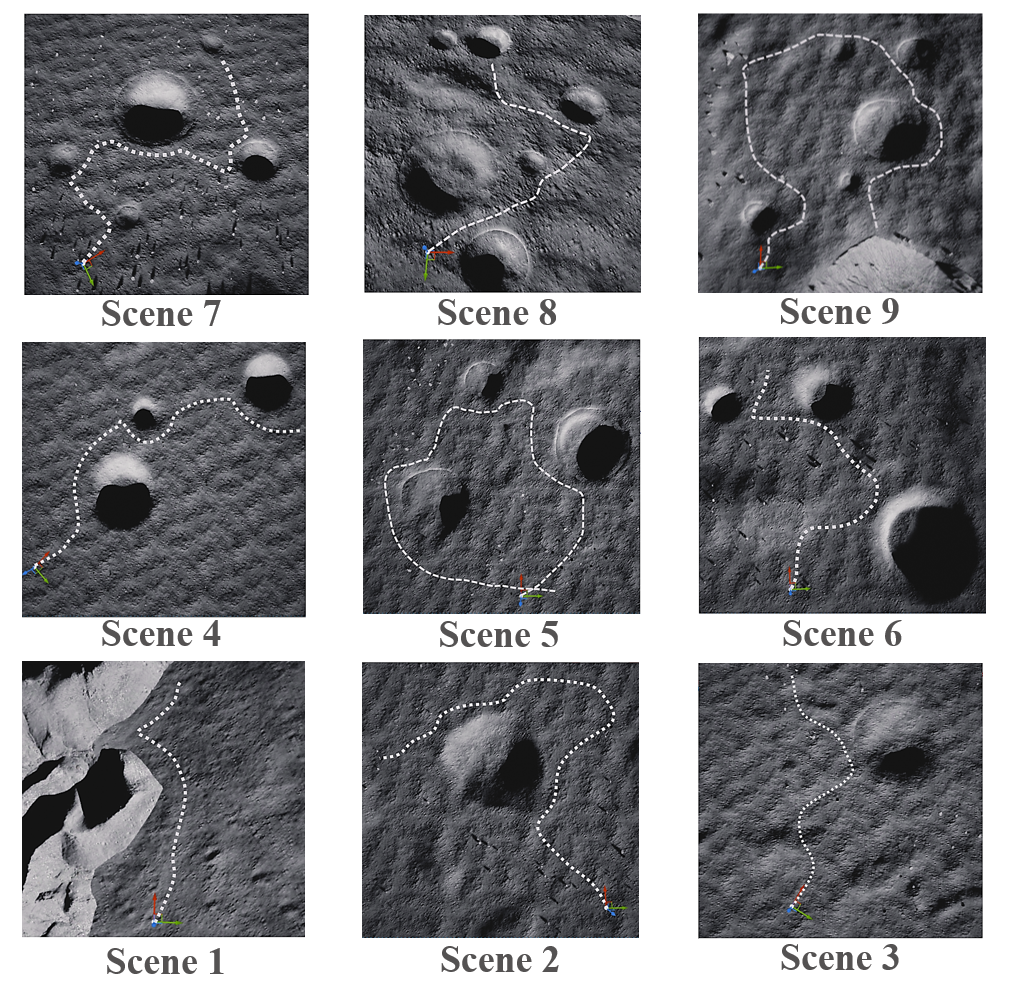

Sample trajectory visualization across different scenes.

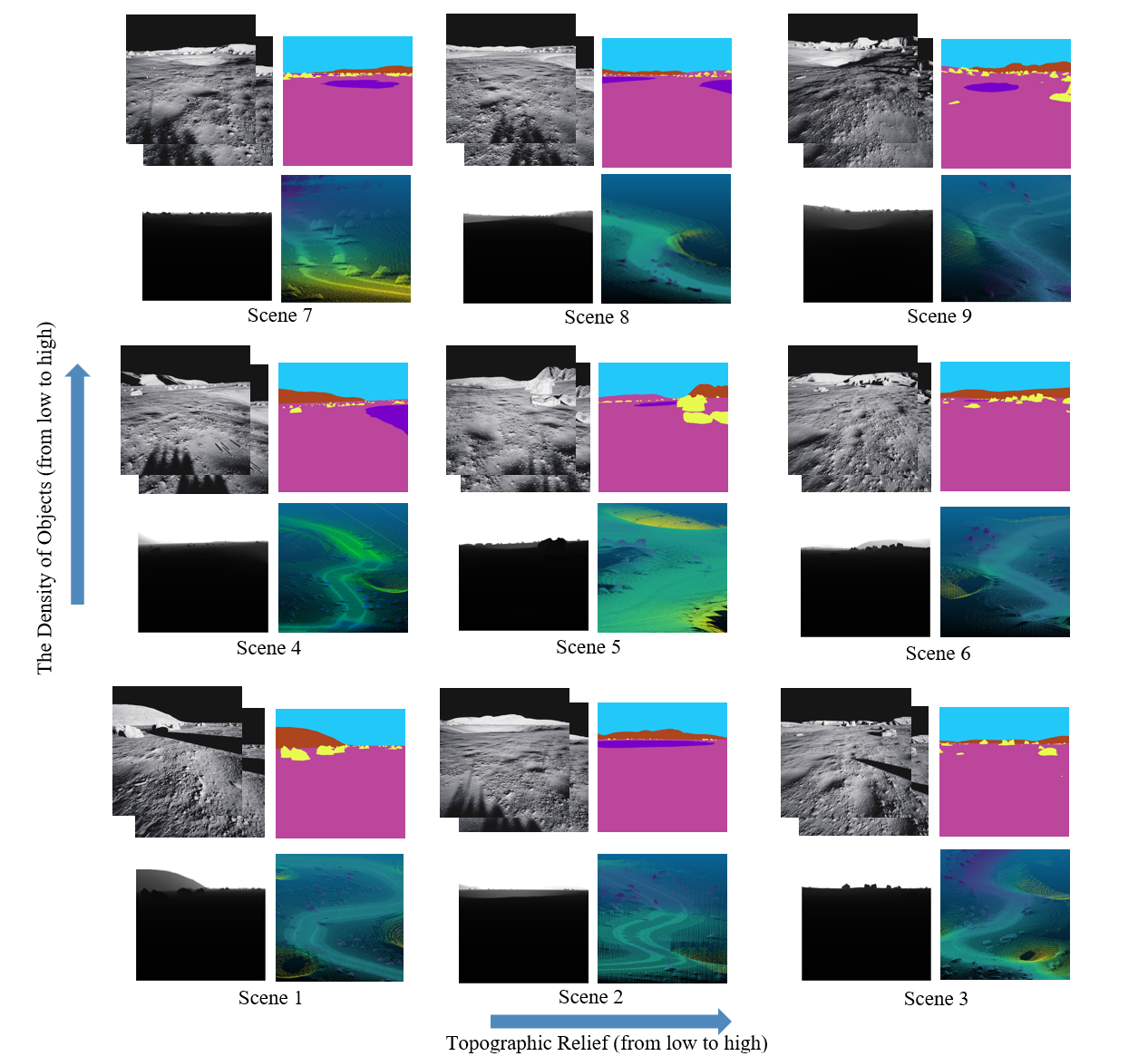

Multi-modal data visualization: RGB, semantic labels, depth, and LiDAR.

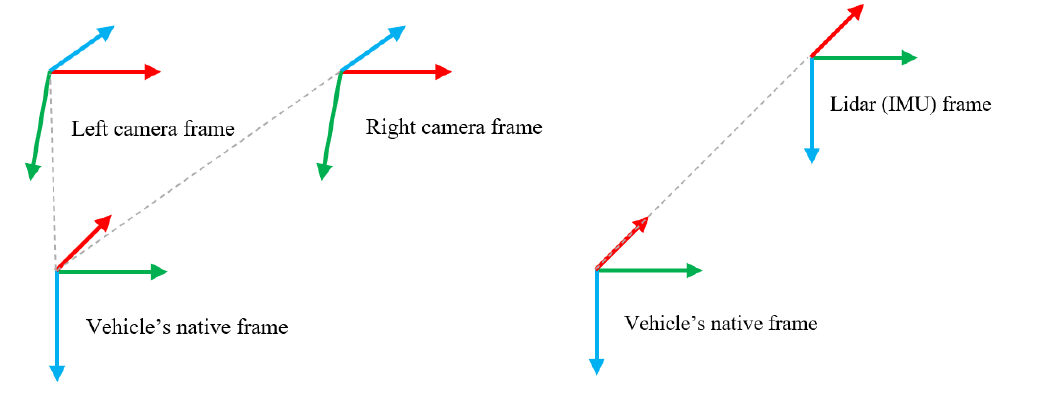

Sensor configuration and coordinate system definitions.

News

- [2024.09] 🎉 Paper submitted to TGRS and currently under review.

- [2024.09] 📝 arXiv v3 released with updated experimental results.

- [2024.07] 🚀 LuSNAR dataset and code publicly released.

- [2024.07] 📄 Initial preprint available on arXiv.

Highlights

- 🌕 First Multi-Task Lunar Benchmark: Comprehensive dataset supporting semantic segmentation, SLAM, and 3D reconstruction simultaneously.

- 📊 108 GB High-Quality Data: Multi-sensor synchronized data including stereo cameras, LiDAR, and IMU across 9 diverse lunar scenes.

- 🎯 Pixel-Perfect Ground Truth: High-precision semantic labels, depth maps, and 3D point clouds with category annotations.

- 🏔️ Diverse Terrain Coverage: Scenes categorized by topographic relief and object density for robust algorithm evaluation.

- 🔧 Unreal Engine Based: Photo-realistic rendering with physically accurate sensor simulation.

Supported Tasks

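- 2D Semantic Segmentation: Pixel-wise scene understanding

- 3D Semantic Segmentation: Point cloud classification

- Visual SLAM: Camera-based localization

- LiDAR SLAM: 3D mapping and odometry

- Stereo Matching: Depth estimation from stereo

- 3D Reconstruction: Dense surface reconstruction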

Dataset Statistics

| Component | Size | Details |
|---|---|---|
| Stereo Images | 42 GB | 1024×1024, 80°×80° FOV, 10 Hz |
| Depth Maps | 50 GB | Dense per-pixel depth |
| Semantic Labels | 356 MB | 2D masks + 3D point annotations |
| LiDAR Point Clouds | 14 GB | Up to 20M points/sec, semantic labels |
| IMU Data | - | 100 Hz, 6-DOF measurements |
| Ground Truth Poses | - | Sub-millimeter accuracy |
| Total Size | 108 GB | 9 scenes, multiple trajectories |

Dataset Structure

LuSNAR/

├── image1/ # Left camera

│ ├── RGB/ # Color images

│ ├── Depth/ # Depth maps

│ └── Label/ # Semantic labels

├── image2/ # Right camera (same structure)

├── LiDAR/ # Point cloud data

│ ├── timestamp1.txt # Format: x y z category

│ └── ...

├── Rover_pose.txt # Ground truth trajectory

└── IMU.txt # IMU measurements

Semantic Categories

2D Semantic Labels

| Category | Hex Code |
|---|---|
| Lunar Regolith | #BB469C |
| Impact Crater | #7800C8 |
| Rock | #E8FA50 |
| Mountain | #AD451F |
| Sky | #22C9F8 |
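The color codes above can be turned into class indices for training a segmentation model. The following is a minimal sketch, assuming the label images store exactly these colors in RGB channel order (images loaded with OpenCV arrive as BGR and would need reordering first). The index assignment 0 to 4 and the helper names `hex_to_rgb` and `colors_to_indices` are choices made for this example, not part of the dataset.

```python
# Hex codes come from the 2D Semantic Labels table. The class indices 0..4
# follow the table order and are an arbitrary choice made for this sketch.
LABEL_HEX = {
    "Lunar Regolith": "#BB469C",
    "Impact Crater": "#7800C8",
    "Rock": "#E8FA50",
    "Mountain": "#AD451F",
    "Sky": "#22C9F8",
}


def hex_to_rgb(code):
    """Convert '#RRGGBB' to an (R, G, B) tuple of integers in 0..255."""
    code = code.lstrip("#")
    return tuple(int(code[i:i + 2], 16) for i in (0, 2, 4))


# Lookup tables built once: color -> index, and index -> category name.
RGB_TO_INDEX = {hex_to_rgb(h): i for i, h in enumerate(LABEL_HEX.values())}
INDEX_TO_NAME = list(LABEL_HEX)


def colors_to_indices(rows, unknown=-1):
    """Map a 2D grid of (R, G, B) pixels to a 2D grid of class indices.

    Pixels whose color is not in the table are marked with `unknown`.
    """
    return [[RGB_TO_INDEX.get(tuple(px), unknown) for px in row] for row in rows]
```

For full 1024 × 1024 images, a vectorized array library would be much faster than nested lists; the lookup logic stays the same.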

3D Point Cloud Labels

| Category ID | Category |
|---|---|
| -1 | Lunar Regolith |
| 0 | Impact Crater |
| 174 | Rock |
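Combining these IDs with the `x y z category` format noted in the dataset structure, a LiDAR frame could be read roughly as follows. This is a sketch under assumptions: one whitespace-separated point per line, no header row, and a category field that may be written either as an integer or as a float. The function names are illustrative only.

```python
# Category IDs from the 3D Point Cloud Labels table.
LIDAR_CATEGORIES = {-1: "Lunar Regolith", 0: "Impact Crater", 174: "Rock"}


def parse_lidar_frame(lines):
    """Parse lines of 'x y z category' into (x, y, z, category) tuples.

    Assumes whitespace-separated fields; lines with fewer than four
    fields (for example blank lines) are skipped.
    """
    points = []
    for line in lines:
        fields = line.split()
        if len(fields) < 4:
            continue
        x, y, z = (float(v) for v in fields[:3])
        # Go through float() first so that '174' and '174.0' both parse.
        category = int(float(fields[3]))
        points.append((x, y, z, category))
    return points


def count_by_category(points):
    """Count points per category name, keeping unknown IDs visible."""
    counts = {}
    for *_, category in points:
        name = LIDAR_CATEGORIES.get(category, f"unknown({category})")
        counts[name] = counts.get(name, 0) + 1
    return counts
```

To use it on the dataset, pass an open file handle for one of the files under `LiDAR/` as `lines`. Reporting unknown IDs rather than dropping them makes it easy to notice if a file deviates from the assumed format.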

Sensor Specifications

📷 Stereo Camera

- Resolution: 1024 × 1024 pixels

- Frame Rate: 10 Hz

- Field of View: 80° × 80°

- Baseline: 310 mm

- Focal Length: 610.17784 pixels
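The focal length listed above is consistent with a pinhole model applied to the stated resolution and field of view, and together with the baseline it converts stereo disparity to metric depth. The sketch below assumes an ideal, rectified pinhole stereo pair with the principal point at the image center; the function names are illustrative.

```python
import math

# Values from the Stereo Camera specification.
IMAGE_WIDTH_PX = 1024
HORIZONTAL_FOV_DEG = 80.0
BASELINE_M = 0.310           # 310 mm
FOCAL_LENGTH_PX = 610.17784


def focal_length_from_fov(width_px, fov_deg):
    """Pinhole focal length in pixels: f = (W / 2) / tan(FOV / 2)."""
    return (width_px / 2.0) / math.tan(math.radians(fov_deg) / 2.0)


def depth_from_disparity(disparity_px, focal_px=FOCAL_LENGTH_PX,
                         baseline_m=BASELINE_M):
    """Depth in meters for a rectified pair: Z = f * B / d.

    Non-positive disparities are treated as points at infinity.
    """
    if disparity_px <= 0:
        return math.inf
    return focal_px * baseline_m / disparity_px
```

Under these assumptions, `focal_length_from_fov(1024, 80.0)` reproduces the listed focal length to within a thousandth of a pixel, and a disparity of 10 pixels corresponds to a depth of about 18.9 m.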

📡 LiDAR

- Type: 128-beam spinning LiDAR

- Frequency: 10 Hz

- Horizontal FOV: 360°

- Vertical FOV: -25° to +27°

- Range: ≤30 m

- Point Rate: Up to 20M points/second
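These figures imply a rough upper bound on scan density. The arithmetic below is a back-of-the-envelope sketch, assuming the maximum point rate is spread evenly over all beams and that beams are uniformly spaced across the vertical field of view; neither assumption is stated by the dataset, and actual frames may contain far fewer points.

```python
# Values from the LiDAR specification.
BEAMS = 128
SCAN_RATE_HZ = 10
MAX_POINT_RATE = 20_000_000          # "up to" 20M points/second
VERTICAL_FOV_DEG = (-25.0, 27.0)


def points_per_scan(point_rate, scan_rate_hz):
    """Upper bound on points in one full 360-degree sweep."""
    return point_rate / scan_rate_hz


def horizontal_resolution_deg(point_rate, scan_rate_hz, beams):
    """Azimuth step per beam, assuming points are spread evenly over beams."""
    per_beam = points_per_scan(point_rate, scan_rate_hz) / beams
    return 360.0 / per_beam


def vertical_spacing_deg(fov_deg, beams):
    """Angle between adjacent beams, assuming uniform vertical spacing."""
    low, high = fov_deg
    return (high - low) / (beams - 1)
```

With the listed values this gives at most 2,000,000 points per sweep, an azimuth step of about 0.023° per beam, and roughly 0.41° between adjacent beams.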

🧭 IMU

- Frequency: 100 Hz

- Axes: 3-axis accelerometer & gyroscope

- Accelerometer Random Walk: 0.002353596 m/s³√Hz

- Gyroscope Random Walk: 8.7266462e-5 rad/s√Hz

Download

Main Dataset (108 GB): CSTCloud Link (Password: fjZt)

BEV Data (Optional): CSTCloud Link (Password: jtk0)

BibTeX

@article{liu2024lusnar,

title={LuSNAR: A Lunar Segmentation, Navigation and Reconstruction Dataset based on Muti-sensor for Autonomous Exploration},

author={Liu, Jiayi and Zhang, Qianyu and Wan, Xue and Zhang, Shengyang and Tian, Yaolin and Han, Haodong and Zhao, Yutao and Liu, Baichuan and Zhao, Zeyuan and Luo, Xubo},

journal={arXiv preprint arXiv:2407.06512},

year={2024}

}